THE NON-DESIGNER'S TYPE BOOK

Adobe PageMaker

PageMaker includes built-in shortcuts for some of the most commonly used special characters, so you don't have to type the Alt codes (although you can still use any of the Alt codes in PageMaker if you choose). In addition, there is information below about other special PageMaker features that help in the typographic world.

Character   Type it this way        What is it?

‘           Alt [                   opening single quote
’           Alt ]                   apostrophe, closing single quote
“           Alt Shift [             opening double quote
”           Alt Shift ]             closing double quote
–           Alt - (hyphen)          en dash
—           Alt Shift - (hyphen)    em dash
®           Alt r                   registration symbol
©           Alt g                   copyright symbol
•           Alt 8                   bullet
§           Alt 6                   section symbol

Non-breaking spaces

These spaces of varying width do not break; that is, the computer does not see them as word spaces at the end of a line, so if a non-breaking space is between two words, those two words will never separate. Also use these spaces to make quick indents, consistent spacing in a line of items, etc.

em space (width of point size)           Control Shift M
en space (half of an em space)           Control Shift N
thin space (quarter of an em space)      Control Shift T
non-breaking regular space               Control Alt Spacebar OR Control Shift H
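
The width relationships listed above can be worked out for any type size. The following is a minimal sketch of that arithmetic only; the function name `space_widths` is hypothetical and is not part of PageMaker.

```python
def space_widths(point_size):
    """Return the widths, in points, of the fixed-width spaces listed above."""
    em = float(point_size)   # em space: as wide as the point size of the type
    return {
        "em": em,
        "en": em / 2,        # en space: half of an em space
        "thin": em / 4,      # thin space: quarter of an em space
    }


# Example: the three widths for 12-point type.
print(space_widths(12))
```

For 12-point type this gives an em space of 12 points, an en space of 6 points, and a thin space of 3 points.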

Special hyphens

Type the discretionary hyphen between syllables in a word when you want to hyphenate a word at the end of a line, but you want the hyphen to disappear if the text gets edited. You can also type this invisible character at the beginning of a word, and that word will never hyphenate.

- (discretionary hyphen)         (v6.5) Control Shift Hyphen
                                 (v6 or v5) Control Hyphen

Type the non-word-breaking hyphen between hyphenated words so those words will never separate, even at the end of a line. It will look just like a regular hyphen. You might want to use it in phone numbers.

- (non-word-breaking hyphen)     Control Alt Hyphen

Kerning

Position the insertion point between two characters, or select a range of characters. Then:

amount                          delete space                      add space

.001 (thousandths of an em)     type in a negative amount         type in a positive amount
                                in the control palette (-.003)    in the control palette (.007)

.01 (hundredths of an em)       Control Alt LeftArrow             Control Alt RightArrow

.04 (1/25 of an em)             Alt LeftArrow                     Alt RightArrow
                                OR Control Backspace              OR Control Shift Backspace

Remove all kerning              Select text, press Control Alt K
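
Because kerning amounts are fractions of an em, the same setting moves characters by a different physical distance at each type size. The sketch below illustrates that arithmetic under the assumption that one em equals the point size of the type; the names `kern_in_points` and `total_kern_em` are hypothetical and only illustrate the table above.

```python
# Kerning step sizes, in ems, taken from the table above.
FINE_STEP_EM = 0.01     # Control Alt LeftArrow / RightArrow
COARSE_STEP_EM = 0.04   # Alt LeftArrow / RightArrow


def kern_in_points(kern_em, point_size):
    """Convert a kerning amount in ems to points at the given type size."""
    return kern_em * point_size


def total_kern_em(fine_presses=0, coarse_presses=0):
    """Net kerning in ems after a series of key presses.

    Positive counts add space; negative counts delete space.
    """
    return fine_presses * FINE_STEP_EM + coarse_presses * COARSE_STEP_EM


# Example: a control palette entry of -.003 em applied to 24-point type.
print(kern_in_points(-0.003, 24))
```

Two fine "add space" presses followed by one coarse "delete space" press, `total_kern_em(2, -1)`, net out to roughly -.02 em.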

APPENDIX C: FONT UTILITIES


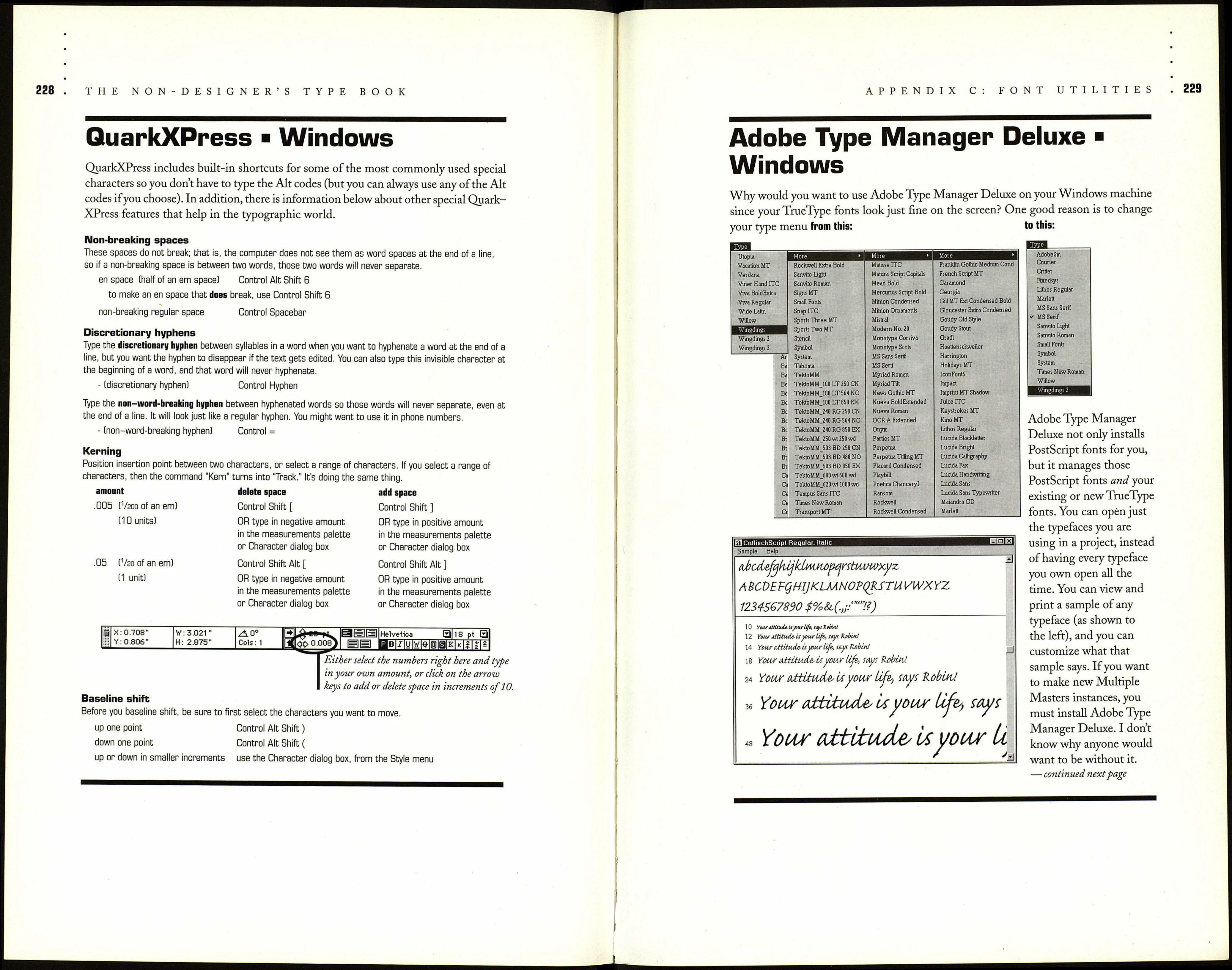

Windows

Baseline shift

The amount by which the control palette (shown below) nudges the baseline shift is determined by the nudge amount you set in the General Preferences (shown farther below). Hold down the Shift key to nudge by ten times the amount set in Preferences. That is, if your nudge amount is set at 1 point, Shift-nudge would move items in 10-point increments.

Before you shift the baseline, be sure to first select the characters you want to move.

[Figure: the control palette, set to Sanvito Roman, size 12, leading 14.4, with the baseline shift box indicated.]

Either enter an amount here in this box, or click the up and down arrow keys.

[Figure: the Preferences dialog box. Measurements in: Picas. Vertical ruler: Picas. Control palette: Horizontal nudge 0p1 Picas; Vertical nudge 0p1 Picas.]

The amount you enter in these boxes, plus the measurement system you choose here (the current system is "Picas"), determines how much the baseline gets nudged.
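
The nudge rule described in this section is simple arithmetic: the amount set in Preferences, multiplied by ten when Shift is held. The sketch below assumes the standard convention that 1 pica equals 12 points; the function name `nudge_in_points` is hypothetical and is not a PageMaker feature.

```python
POINTS_PER_PICA = 12  # assumed standard conversion: 1 pica = 12 points


def nudge_in_points(picas=0, points=0.0, shift_held=False):
    """Return the distance of one baseline nudge, in points.

    The Preferences amount is given as picas plus points;
    holding Shift multiplies the nudge by ten.
    """
    base = picas * POINTS_PER_PICA + points
    return base * 10 if shift_held else base


# Example: a 1-point nudge amount, without and with the Shift key.
print(nudge_in_points(points=1))
print(nudge_in_points(points=1, shift_held=True))
```

With the nudge amount set at 1 point, a plain nudge moves the baseline 1 point and a Shift-nudge moves it 10 points, matching the example in the text.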